Generating an answer is easy; synthesizing the perfect context is the actual engineering challenge of the next decade.

At the genesis of the Generative AI era, around late 2022, the world was captivated by the magic of models that could speak. However, this "magic" quickly hit a wall. Early users discovered that AI was plagued by three critical flaws: it was prone to vivid hallucinations, it relied on outdated information, and, despite its eloquence, it could often be remarkably "dumb" when faced with complex reasoning. Perhaps most unsettling was how authoritatively convincing these models could be in their stupidity, delivering blatant falsehoods with the unshakable confidence of a world-class expert.

Bridging this gap between "impressive" and "reliable" has since become one of the biggest challenges for AI engineers. We realized that raw power wasn't enough. Let’s take a closer look at the industry’s response to this crisis: a strategic journey from 2022 to today, shifting from raw capacity to the science of Context Management.

Long Context Windows

The industry first turned to a straightforward solution: increasing the size of the "Context Window." By 2023, models had evolved from processing a few pages to handling entire libraries in a single prompt. This is what we now call Long Context Windows.

To understand why size alone is not enough, we must look at the "engine" under the hood: the Transformer architecture. It revolutionized AI by using an "Attention" mechanism, which lets the model weigh the importance of every word in its input relative to the others, regardless of the distance between them.
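
To make the idea of "weighing importance regardless of distance" concrete, here is a minimal sketch of scaled dot-product attention for a single query, written in plain Python. The toy vectors are invented for illustration, and a real Transformer adds learned projections, multiple heads, and batching that are left out here.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # The output is the weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Toy 2-d vectors (made up): the query resembles the first and last keys,
# so those positions receive the most weight even though they sit at
# opposite ends of the sequence.
keys = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.0]]
values = [[10.0, 0.0], [0.0, 10.0], [0.0, 10.0], [10.0, 0.0]]
weights, output = attention([2.0, 0.0], keys, values)
print([round(w, 3) for w in weights])
```

Position plays no role in the score, only similarity does. The flip side is that every query is compared with every key, so the amount of work grows quickly as the input gets longer.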

Due to hardware constraints (slower GPUs and less VRAM), early language models were trained with tiny context windows, ranging from 512 to 2048 tokens (barely 2 to 3 pages of text). Recently, massive improvements in hardware and more efficient algorithms have pushed these boundaries to 32K, 100K, and even 1M+ tokens. But this "Window Race" created a paradox. While we can now fit an entire library into the AI's input, it remains unclear how these models actually process that data. A larger window doesn't mean a smarter brain; it often just creates a larger haystack where the "needle" of information is buried and easily ignored.

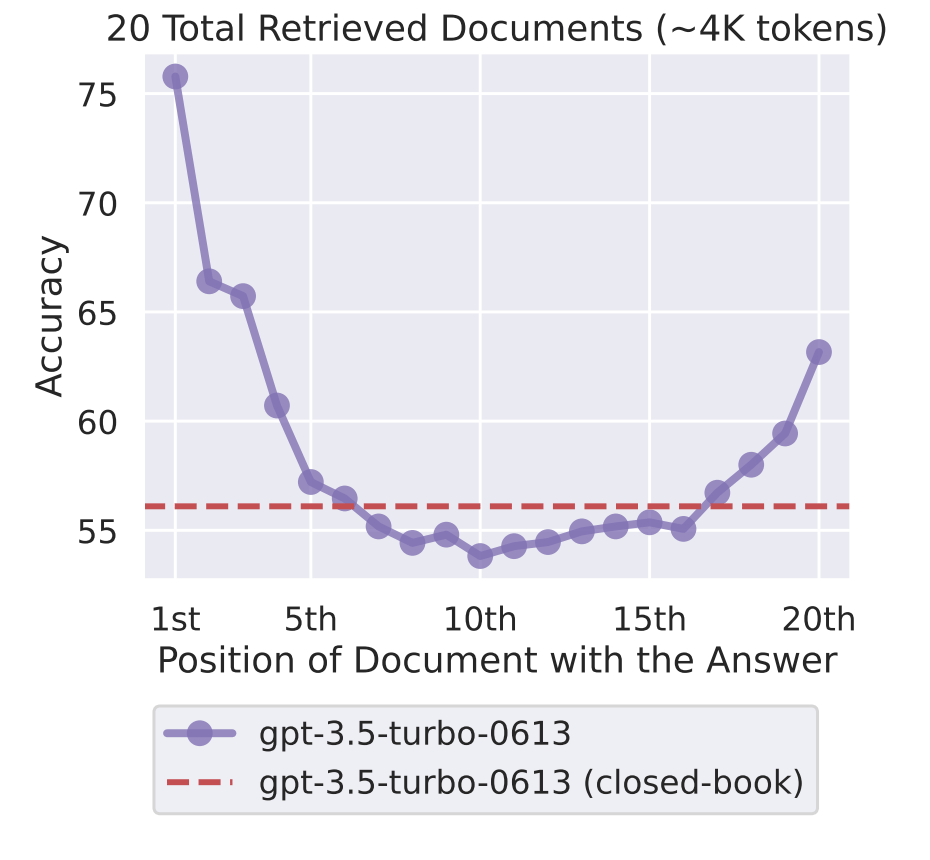

This led to a phenomenon known as "Lost in the Middle." As highlighted in research by Stanford, LLMs suffer from a significant U-shaped performance curve: they are excellent at recalling information from the very beginning or the very end of a document, but their accuracy plummets for any data buried in the middle.

The chart above clearly demonstrates how model accuracy plunges in the center of the context window (near the 10th document) only to recover towards the end.

"Long Context" without management quickly became a performance nightmare. Every time you asked a question, the model had to re-process the entire document from scratch, leading to prohibitive costs and latency. We call that "Re-crunching", and it became clear that capacity without orchestration is just noise.

CAG (Cache-Augmented Generation)

CAG is the architectural breakthrough that solves this. By using Context Caching to freeze the mathematical state of the data, CAG transforms the Long Context from a slow, expensive archive into a real-time, high-performance reasoning engine.

Deep Dive: How Context Caching Works

To achieve this, we rely on Context Caching. Here is the "Write Once, Read Many" logic that is disrupting AI economics:

Mathematical Persistence: When you pre-load a large corpus (up to 2M+ tokens), the model processes it once and stores the resulting "mathematical state" directly in its KV Cache (the attention keys and values held in memory).

TTL (Time To Live): This cache persists in GPU memory for a defined duration (often an hour or more, depending on the provider and configuration).

Cost Efficiency: As long as the cache is active, you pay full price only for the initial processing. Every subsequent question reuses the cached state instead of paying again to "re-read" the data (in practice, providers typically bill cached tokens at a reduced rate rather than nothing).
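
The "Write Once, Read Many" logic can be sketched with a toy cache. This is not a real provider API: the `ContextCache` class, its `ask` method, and the token counts are hypothetical, and cached reads are treated as free to keep the arithmetic simple.

```python
import time

class ContextCache:
    """Toy stand-in for a provider-side context cache (hypothetical, not a real API)."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self.state = None          # stands in for the stored KV state
        self.expires_at = 0.0
        self.tokens_processed = 0  # full-price tokens processed so far

    def _is_live(self):
        return self.state is not None and self.clock() < self.expires_at

    def ask(self, corpus_tokens, question_tokens):
        if not self._is_live():
            # Write once: process the corpus and keep the resulting state.
            self.tokens_processed += corpus_tokens
            self.state = f"state-for-{corpus_tokens}-tokens"
            self.expires_at = self.clock() + self.ttl_seconds
        # Read many: only the new question needs fresh processing.
        self.tokens_processed += question_tokens
        return self.state

# A simulated clock lets the example run instantly.
now = [0.0]
cache = ContextCache(ttl_seconds=3600, clock=lambda: now[0])

for _ in range(3):
    cache.ask(corpus_tokens=100_000, question_tokens=50)
within_ttl = cache.tokens_processed     # 100_000 + 3 * 50

now[0] = 3601                           # move past the TTL
cache.ask(corpus_tokens=100_000, question_tokens=50)
after_expiry = cache.tokens_processed   # the corpus is processed a second time
```

Three questions inside the TTL cost one pass over the corpus plus three short questions. Once the TTL lapses, the next question pays for the whole corpus again, which is why the TTL matters as much as the cache itself.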

You don't need to be a data scientist to master this logic. You can start managing context today at a personal level. Regardless of the AI model you use, most advanced interfaces now allow you to populate a "system cache" or "persistent instructions."

For instance, I use this approach to anchor my own AI interactions: by caching my specific career objectives and the hard skills I aim to master, I ensure that every answer the AI generates is filtered through my personal growth strategy. Instead of receiving generic advice, I get insights that are architected to move me closer to my goals.

Whether you are managing a 2,000-page technical manual or a 50-word list of career milestones, the principle remains the same: the quality of the output is a direct function of how you curate the active context.

RAG (Retrieval-Augmented Generation)

“Even the most expansive desk has its limits. When the mission requires crossing terabytes of history or real-time shifting data, we must call upon the Librarian.”

RAG isn't a single action: it is a two-phase engineering cycle designed to filter the universe of data into a single context package.

Phase I: The Offline Infrastructure (Preparation)

Parsing & Logical Analysis: The system doesn't just ingest text; it analyzes the document's DNA (titles, headers, and hierarchical structures) to understand how information is organized.

Tree Building: We create a "Knowledge Tree" where every fragment is linked to its "Parent" (section) and "Neighbors" (related paragraphs). This ensures we never lose the logical thread of a document.

Vector Indexing: Each fragment is enriched with metadata and converted into a Vector (a mathematical coordinate) stored in a high-speed database like Pinecone.
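
As a rough illustration of the Tree Building step, the sketch below links each fragment to its parent section and to its neighbors. The `Fragment` class and `build_tree` function are hypothetical names, and "neighbors" is simplified here to mean the previous and next paragraph in the same section; a production system could define relatedness differently.

```python
from dataclasses import dataclass, field

@dataclass
class Fragment:
    fragment_id: str
    text: str
    parent_id: str                                   # section this fragment belongs to
    neighbor_ids: list = field(default_factory=list)

def build_tree(sections):
    """sections: mapping of section title -> list of paragraphs, in order."""
    fragments = {}
    for title, paragraphs in sections.items():
        ids = [f"{title}#{i}" for i in range(len(paragraphs))]
        for i, text in enumerate(paragraphs):
            # Assumption: neighbors are the previous and next paragraph.
            neighbors = [ids[j] for j in (i - 1, i + 1) if 0 <= j < len(ids)]
            fragments[ids[i]] = Fragment(ids[i], text, title, neighbors)
    return fragments

tree = build_tree({
    "Installation": ["Download the package.", "Run the installer.", "Reboot."],
    "Usage": ["Open the app."],
})
middle = tree["Installation#1"]
print(middle.parent_id, middle.neighbor_ids)
```

With these links stored at indexing time, a later search that lands on one paragraph can always walk back to its section and its surrounding text.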

To put it simply, this preparation phase is a primary defense against hallucinations. By using vectors, the system can recognize that terms like "bicycle" and "bike" represent the same concept, even though the words differ. This is the foundation of what we call Semantic RAG, made possible by vector databases; it is a deep field that warrants its own dedicated article. In the meantime, you can watch this interview with Edo Liberty, CEO of Pinecone, where he explains how his technology powers modern AI.
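
The "bicycle" and "bike" intuition comes down to measuring the angle between two vectors. Here is a minimal sketch using cosine similarity; the three-dimensional vectors are hand-made for illustration, whereas a real embedding model would produce hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hand-made vectors, not the output of any real embedding model.
vectors = {
    "bicycle": [0.90, 0.10, 0.05],
    "bike":    [0.85, 0.15, 0.10],
    "invoice": [0.05, 0.20, 0.95],
}

bike_score = cosine_similarity(vectors["bicycle"], vectors["bike"])
invoice_score = cosine_similarity(vectors["bicycle"], vectors["invoice"])
print(f"bicycle vs bike:    {bike_score:.2f}")
print(f"bicycle vs invoice: {invoice_score:.2f}")
```

Two words that share no letters can still land close together in vector space, which is what keyword search alone cannot capture.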

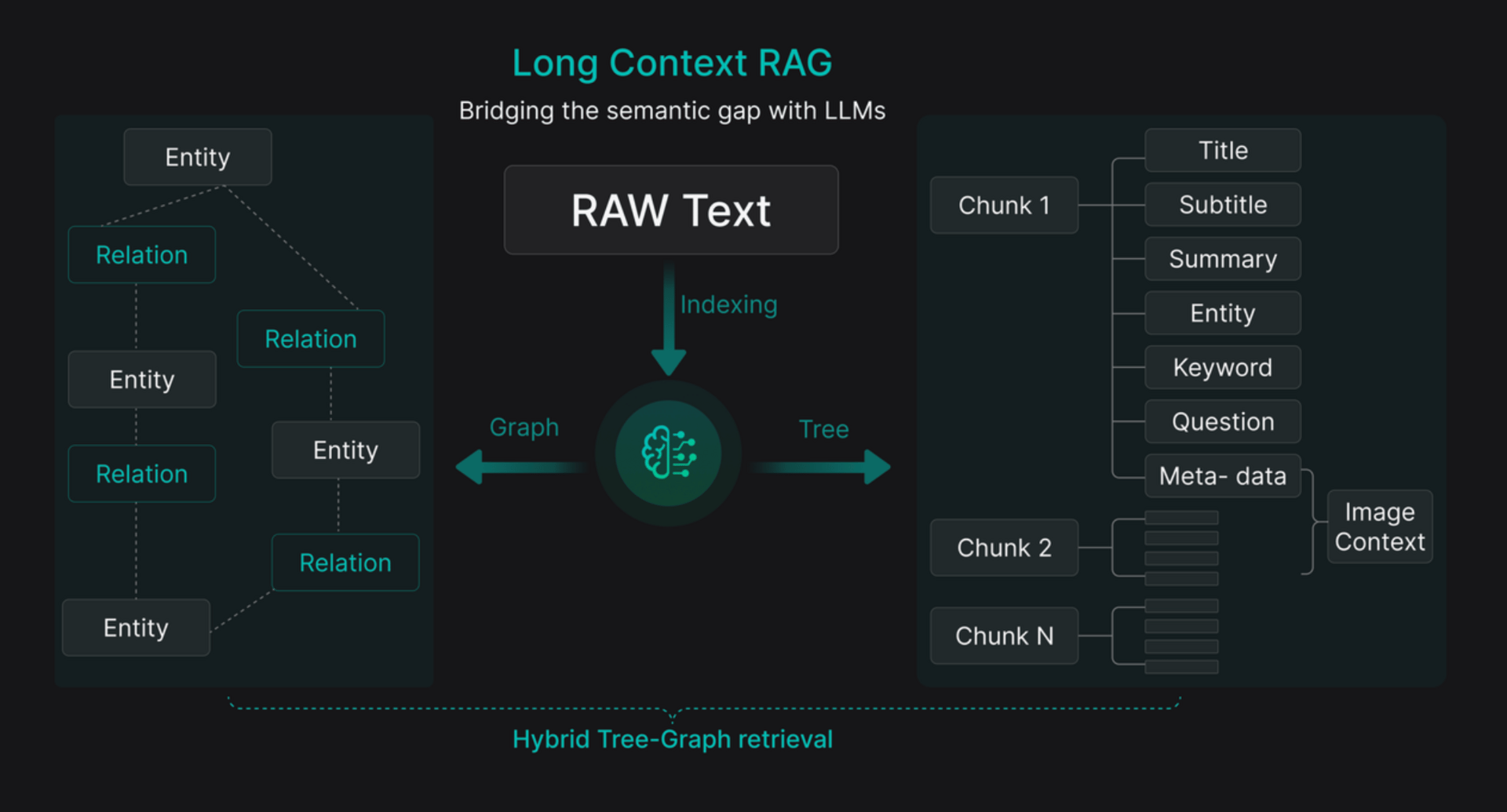

The figure above shows an example of a Long Context RAG architecture that relies on indexing raw text to bridge the semantic gap between raw data and model understanding. The system transforms content into a Graph mapping Entities and their Relations, as well as a Tree organizing each Chunk with its metadata (Title, Summary, Keywords, Question). This approach enables intelligent navigation through knowledge to extract evidence that is both precise and structurally complete.
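
One possible way to hold these two structures side by side is sketched below. The `Chunk` and `KnowledgeIndex` classes are hypothetical, the metadata fields follow the ones named above, and the graph is reduced to a flat list of (entity, relation, entity) triples tagged with the chunk they came from.

```python
from dataclasses import dataclass, field

@dataclass
class Chunk:
    text: str
    title: str
    summary: str
    keywords: list
    question: str   # a question this chunk can answer

@dataclass
class KnowledgeIndex:
    chunks: dict = field(default_factory=dict)      # chunk_id -> Chunk
    relations: list = field(default_factory=list)   # (entity, relation, entity, chunk_id)

    def add_chunk(self, chunk_id, chunk, triples=()):
        self.chunks[chunk_id] = chunk
        for subject, relation, obj in triples:
            self.relations.append((subject, relation, obj, chunk_id))

    def evidence_for(self, entity):
        """Return the chunks that mention an entity in any relation."""
        ids = {cid for s, _, o, cid in self.relations if entity in (s, o)}
        return [self.chunks[cid] for cid in sorted(ids)]

index = KnowledgeIndex()
index.add_chunk(
    "c1",
    Chunk("Titan orbits Saturn.", "Moons", "Titan is a moon of Saturn.",
          ["Titan", "Saturn"], "What does Titan orbit?"),
    triples=[("Titan", "orbits", "Saturn")],
)
index.add_chunk(
    "c2",
    Chunk("Europa orbits Jupiter.", "Moons", "Europa is a moon of Jupiter.",
          ["Europa", "Jupiter"], "What does Europa orbit?"),
    triples=[("Europa", "orbits", "Jupiter")],
)
hits = index.evidence_for("Saturn")
print([chunk.text for chunk in hits])
```

The graph answers "which chunks talk about this entity?", while the metadata on each chunk gives the retriever more than raw text to match against.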

Phase II: The Online Chronogram (Semantic Search)

When a user asks a question, the RAG engine triggers a high-speed retrieval sequence. This is the "Freshness Layer" of the architecture:

Vectorization (T+200ms): The question is translated into the same mathematical language as the library.

The Semantic Scan (T+100ms): The system performs a "Locate" operation by comparing the question's vector to the billions of vectors created during the Offline phase.

Dynamic Assembly (T+1000ms): Instead of delivering fragmented bits of text, the system uses the Knowledge Tree to Expand the context. It retrieves the "Parent" and "Neighbors" of the found fragment to provide a logically complete package.
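
The three online steps can be sketched end to end on a tiny in-memory index. Everything here is a simplification: `embed` is a toy word-count function standing in for a real embedding model, the scan is a linear search rather than a vector database query, and "neighbors" means adjacent fragments in the same section.

```python
import math

VOCAB = ["install", "reboot", "license", "download"]

def embed(text):
    """Toy embedding: word counts over a tiny fixed vocabulary."""
    words = [w.strip(".,?!") for w in text.lower().split()]
    return [words.count(term) for term in VOCAB]

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

# Output of the offline phase: fragments in document order, grouped by parent.
FRAGMENTS = [
    {"id": 0, "parent": "Setup", "text": "Download the package."},
    {"id": 1, "parent": "Setup", "text": "Install it with the wizard."},
    {"id": 2, "parent": "Setup", "text": "Reboot when prompted."},
    {"id": 3, "parent": "Legal", "text": "The license is per seat."},
]
INDEX = [(frag, embed(frag["text"])) for frag in FRAGMENTS]

def retrieve(question):
    # 1. Vectorization: put the question in the same space as the index.
    query_vec = embed(question)
    # 2. Semantic scan: locate the closest fragment.
    best, _ = max(INDEX, key=lambda item: cosine(query_vec, item[1]))
    # 3. Dynamic assembly: add same-section neighbors around the hit.
    package = [
        frag for frag in FRAGMENTS
        if frag["parent"] == best["parent"] and abs(frag["id"] - best["id"]) <= 1
    ]
    return best["parent"], [frag["text"] for frag in package]

section, context = retrieve("How do I install the tool?")
print(section, context)
```

The scan alone would return only the "Install" sentence. The assembly step is what hands the model the download and reboot steps around it, so the answer reflects the whole procedure.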

This Online layer is what prevents the AI from becoming a relic of its training date. To make it more visual for you, consider the discovery of Saturn’s moons:

A model trained in 2022 is convinced Saturn has 82 moons.

Static databases updated in mid-2023 might mention 145.

But by triggering a Dynamic Search during the Online phase, the system captures a scientific discovery made just hours ago.

By injecting this "hot" data into the reasoning loop, RAG transforms the AI from a static encyclopedia into a living knowledge system, capable of verifying facts in real time before delivering its answer.

Strategic Alliance: Why Enterprises Are Doing Both

However, a significant hurdle remains: standard RAG is often too slow (~1.5s) for high-frequency needs, necessitating the use of specialized LPU hardware (like Groq's) and the strategic caching orchestration we have discussed. Even so, RAG's position as critical infrastructure remains unshaken: it forms the robust foundation for enterprise intelligence.

Still within the logic of cost optimization, leading organizations already unify these systems: RAG sources the data while CAG stores it for the session, combining scale with speed. This orchestration is powered by "Triggers."

Think of a Trigger as an intelligent alarm: the moment the system realizes the information in its active cache is incorrect or incomplete, it instantly calls the librarian (RAG) to refresh its memory. Without a trigger, an AI is either stuck in a frozen memory (leading to hallucinations if information is missing) or slow because it searches constantly (systematic RAG). With a trigger, we only spend energy (and money) on searching when we are certain the answer is not already there.
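
A trigger can be sketched as a guard in front of the retriever. The version below is deliberately simple and rests on assumptions: the `answer` function is hypothetical, the cache is a plain dictionary keyed by the exact question, and the trigger fires only when an entry is missing or older than `max_age_seconds`. Detecting that cached information is actually incorrect is a harder problem that this sketch does not attempt.

```python
import time

def answer(question, cache, retrieve, max_age_seconds=3600, now=time.time):
    """Return (context, source); call the retriever only when the trigger fires."""
    entry = cache.get(question)
    missing = entry is None
    stale = not missing and now() - entry["stored_at"] > max_age_seconds
    if missing or stale:                      # the trigger
        context = retrieve(question)          # slow path: call the librarian
        cache[question] = {"context": context, "stored_at": now()}
        return context, "rag"
    return entry["context"], "cache"          # fast path: reuse cached context

# Stand-in retriever that records how often it is called.
calls = []
def fake_retrieve(question):
    calls.append(question)
    return f"fresh context for: {question}"

clock = [0.0]
cache = {}
first = answer("moons of Saturn", cache, fake_retrieve, now=lambda: clock[0])
second = answer("moons of Saturn", cache, fake_retrieve, now=lambda: clock[0])
clock[0] = 7200.0   # two hours later the entry is stale
third = answer("moons of Saturn", cache, fake_retrieve, now=lambda: clock[0])
print(first[1], second[1], third[1], len(calls))
```

Three questions produce only two retrievals: the first fills the cache, the second is served from it, and the third refreshes it once the entry has aged out.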

“Reliability is the new gold standard. In the race for enterprise AI, the winners won't be those with the biggest models, but those with the most efficient Context Engineering pipelines.”