AI's insatiable need for electricity is straining global power grids and threatening the climate goals of Big Tech companies.

Most private companies don’t publish exact figures on their energy consumption. So to grasp the scale of AI's energy demand, we first need to understand what it takes to train and deploy a single LLM, then widen our scope to the rest of the industry.

Case Study: GPT-4

A. Training Phase

GPT-4 reportedly has about 1.7 trillion parameters and was pre-trained on roughly 13 trillion tokens, requiring a staggering 20 septillion FLOPs (about 2 × 10^25 floating-point operations). To train this giant, OpenAI likely used around 25,000 GPUs over roughly three months. These Nvidia GPUs are grouped in sets of eight in a specialized unit called an Nvidia HGX server, and one HGX server draws roughly 3 to 6.5 kW depending on load.

Keep in mind that GPT-4 is too large to fit on a single HGX server. Its roughly 120 layers are split into 15 pipeline stages of about 8 layers each, with each stage running on its own HGX server.

Calculation: 15 HGX servers × 8 GPUs = 120 GPUs needed just to hold one copy of the model.

120 GPUs are enough to run the model, but not to train it fast enough. To speed things up, OpenAI scales out with data parallelism: it replicates this '120-GPU snake' hundreds of times so that each copy processes a different batch of data simultaneously.

The Scale-Up: 200 copies × 120 GPUs = 24,000, or roughly 25,000 GPUs running at full throttle.
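The GPU count is simply the product of the parallelism dimensions. The sketch below assumes the figures quoted above (about 120 layers, 15 pipeline stages, 8 GPUs per HGX server, 200 data-parallel replicas), which are unofficial estimates rather than published OpenAI numbers; the constant and function names are illustrative:

```python
# Back-of-envelope GPU count for pipeline parallelism + data parallelism.
# All figures are the article's estimates, not official OpenAI numbers.

LAYERS = 120            # approximate GPT-4 depth
PIPELINE_STAGES = 15    # one HGX server per pipeline stage
GPUS_PER_SERVER = 8     # GPUs in one Nvidia HGX server
REPLICAS = 200          # data-parallel copies of the model


def gpus_per_replica(stages: int, gpus_per_server: int) -> int:
    """GPUs needed to hold one full copy of the model."""
    return stages * gpus_per_server


def total_gpus(stages: int, gpus_per_server: int, replicas: int) -> int:
    """GPUs needed when that copy is replicated for data parallelism."""
    return gpus_per_replica(stages, gpus_per_server) * replicas


layers_per_stage = LAYERS // PIPELINE_STAGES
one_copy = gpus_per_replica(PIPELINE_STAGES, GPUS_PER_SERVER)
cluster = total_gpus(PIPELINE_STAGES, GPUS_PER_SERVER, REPLICAS)

print(f"{layers_per_stage} layers per stage")  # 8 layers per stage
print(f"{one_copy} GPUs per model copy")       # 120 GPUs per model copy
print(f"{cluster:,} GPUs in total")            # 24,000 GPUs in total
```

The exact product is 24,000; the ~25,000 figure is a rounded estimate that leaves some headroom.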

Tech-friendly Analogy: Imagine you have a 13-trillion-page book to memorize. Instead of having a single student (the 120-GPU cluster) read the entire book, you hire 200 identical students and assign each one a different chapter.

All students read at the same time.

Each 'replica' processes its own batch of data.

At the end of each session, they pool their notes: their findings (the gradients) are averaged to update the global model's parameters.
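The "pool their notes" step can be made concrete with a toy model. This is a minimal sketch, not how OpenAI's training stack works: it assumes a one-parameter linear model `y = w * x`, a made-up dataset split across 4 replicas, and plain gradient averaging standing in for the all-reduce used on real clusters. All function names are hypothetical:

```python
# Toy synchronous data-parallel training: each replica ("student") computes a
# gradient on its own shard, the gradients are averaged, and the single shared
# parameter is updated with that average.

from statistics import mean


def local_gradient(w: float, shard: list[tuple[float, float]]) -> float:
    """Gradient of mean squared error for the model y = w * x on one shard."""
    return mean(2 * (w * x - y) * x for x, y in shard)


def data_parallel_step(w: float, shards: list[list[tuple[float, float]]], lr: float) -> float:
    """One step: local gradients -> average ("pool the notes") -> shared update."""
    grads = [local_gradient(w, shard) for shard in shards]  # parallel on real hardware
    avg_grad = mean(grads)
    return w - lr * avg_grad


# Hypothetical dataset following y = 3x, split evenly across 4 replicas.
data = [(float(x), 3.0 * x) for x in range(1, 9)]
shards = [data[i::4] for i in range(4)]

w = 0.0
for _ in range(50):
    w = data_parallel_step(w, shards, lr=0.01)

print(round(w, 3))  # converges to 3.0
```

Because the shards are the same size, the averaged gradient equals the gradient over the full batch, which is why the replicas can split the reading without changing what the model learns.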

Now that we understand why we need this much hardware, let’s get to the core of the article: energy.

Housing these 25,000 GPUs takes approximately 3,125 HGX servers (each holds 8 GPUs). With each server drawing around 6.5 kW continuously for the 90 days of training, the math becomes dizzying: 3,125 servers × 6.5 kW × 24 h × 90 days ≈ 44 GWh, and that counts server power alone, before cooling and networking overhead.

That is roughly the monthly electricity consumption of a small city of 50,000 people.
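The training estimate reduces to a few multiplications. This sketch reuses the assumptions from the text (25,000 GPUs, 8 per server, 6.5 kW per server, 90 days of 24/7 operation) and also derives the per-resident consumption that the 50,000-person city comparison implies; the function name is illustrative:

```python
# Training-energy estimate using the article's assumptions (not official figures).
# Counts server power only: cooling, networking and storage are excluded.

GPUS = 25_000
GPUS_PER_SERVER = 8
KW_PER_SERVER = 6.5        # approximate full-load draw of one HGX server
TRAINING_DAYS = 90
CITY_POPULATION = 50_000   # the comparison city from the text


def training_energy_gwh(gpus: int, gpus_per_server: int,
                        kw_per_server: float, days: int) -> float:
    """Total server energy in GWh for a continuous 24/7 training run."""
    servers = gpus / gpus_per_server
    kwh = servers * kw_per_server * 24 * days  # kW x hours = kWh
    return kwh / 1e6                           # 1 GWh = 1,000,000 kWh


energy = training_energy_gwh(GPUS, GPUS_PER_SERVER, KW_PER_SERVER, TRAINING_DAYS)
per_person_kwh = energy * 1e6 / CITY_POPULATION  # implied monthly use per resident

print(f"{GPUS // GPUS_PER_SERVER:,} servers, {energy:.1f} GWh")
print(f"~{per_person_kwh:.0f} kWh per resident per month")
```

The exact result is 43.875 GWh, which rounds to the ~44 GWh quoted above; the city comparison implies a little under 900 kWh of total (not just residential) electricity per resident per month.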

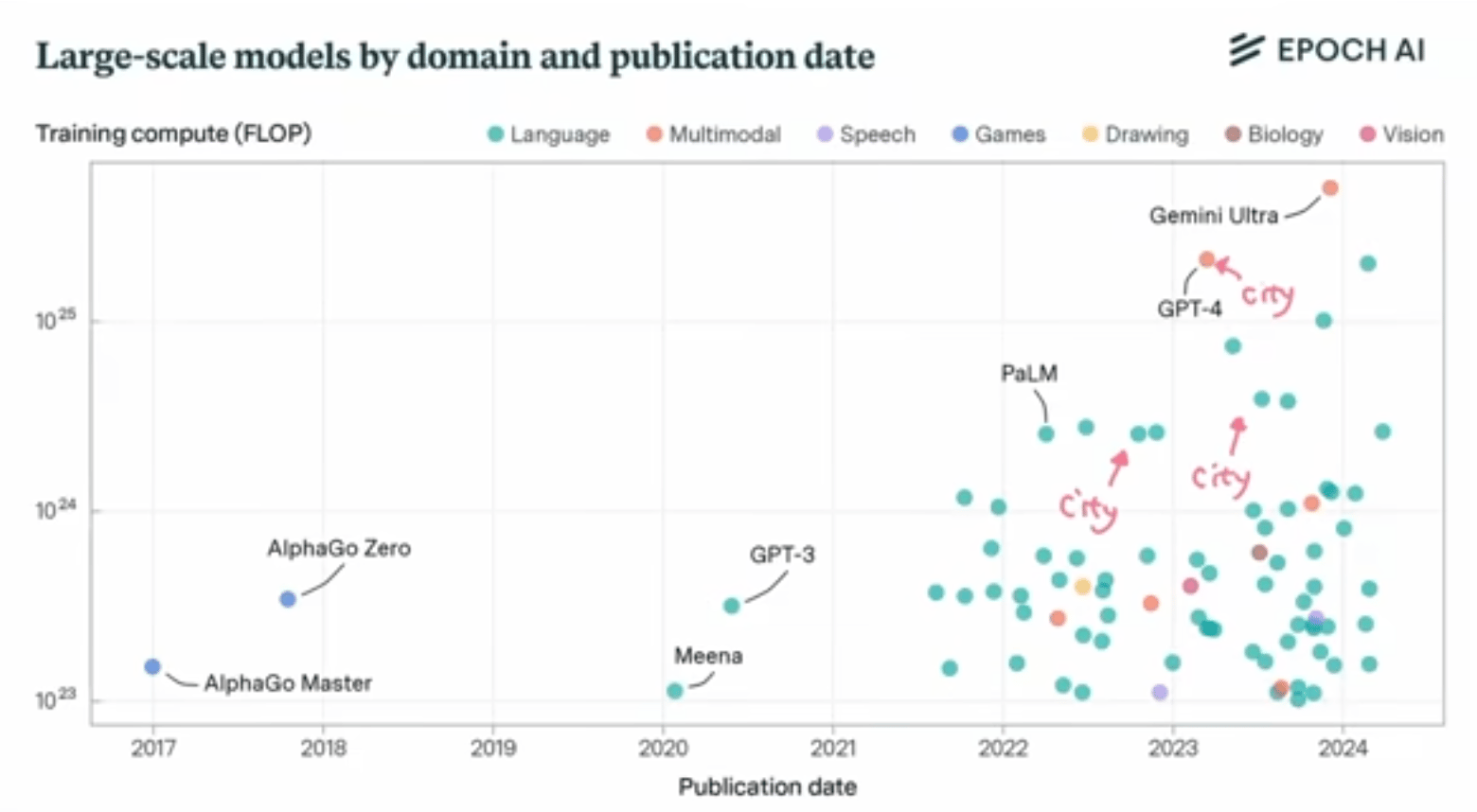

In this graph, each point represents a major model, and each one can easily account for an energy spend comparable to that small city's monthly consumption.

Keep in mind that the HGX server we discussed is already considered older hardware. The industry is shifting to a new rack-scale system, the Blackwell-based NVL72, in which 72 GPUs act as a single unit. However, as efficiency increases (and the marginal cost of training falls), AI companies unfortunately haven't banked the gains as energy savings. Instead, they have used this efficiency to train even larger models.

B. Deployment Phase

Training is just the tip of the iceberg. The real energy sink is inference (using the model). While training happens once per model, inference happens billions of times every day.

To understand the scale, let's look at the projections. OpenAI is projected to reach 700 million users generating 2.5 billion prompts a day. For comparison, a Google search consumes roughly 0.3 Wh, while a ChatGPT query consumes about 2.9 Wh, making a question to an AI roughly 10 times more energy-intensive than a traditional search.

Calculation: 2.5 billion prompts × ~2.9 Wh ≈ 7.25 GWh per day.

Over 30 days, this amounts to ~217 GWh.

To put it in perspective, one month of ChatGPT consumes roughly as much electricity as a city of 250,000 inhabitants uses over the same period, and about five times the ~44 GWh estimated for the entire GPT-4 training run.
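The inference figures can be checked the same way. This sketch uses only the numbers quoted in the text (2.5 billion prompts per day, 2.9 Wh per ChatGPT query, 0.3 Wh per Google search, 30 days, a 250,000-person city, ~44 GWh for training); the per-query energies are rough public estimates, so treat the outputs as orders of magnitude:

```python
# Inference-energy estimate using the per-query figures quoted in the text.

PROMPTS_PER_DAY = 2.5e9
WH_PER_CHATGPT_QUERY = 2.9
WH_PER_GOOGLE_SEARCH = 0.3
DAYS = 30
CITY_POPULATION = 250_000
TRAINING_GWH = 44  # GPT-4 training estimate from the text

daily_gwh = PROMPTS_PER_DAY * WH_PER_CHATGPT_QUERY / 1e9  # Wh -> GWh
monthly_gwh = daily_gwh * DAYS
ratio_vs_search = WH_PER_CHATGPT_QUERY / WH_PER_GOOGLE_SEARCH
per_resident_kwh = monthly_gwh * 1e6 / CITY_POPULATION      # GWh -> kWh, per person
training_multiple = monthly_gwh / TRAINING_GWH

print(f"{daily_gwh:.2f} GWh/day, {monthly_gwh:.1f} GWh over {DAYS} days")
print(f"{ratio_vs_search:.1f}x a Google search, ~{per_resident_kwh:.0f} kWh per resident")
print(f"{training_multiple:.1f}x the training run")
```

The implied ~870 kWh per resident per month matches the scale of the 50,000-person comparison used for training, so the two city analogies are consistent with each other.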

However, this consumption must be weighed against the potential gains. Bill Gates, founder of Breakthrough Energy and one of the world's largest investors in green technology, argues in a Bloomberg interview that AI will eventually 'pay for itself.' His logic is that AI is not just a consumer of electrons but a catalyst for efficiency: by accelerating materials science discoveries (e.g., solar cells that don't rely on silicon) and optimizing complex power grids in real time, the 'intelligence' it produces will ultimately save more energy and carbon than the data centers themselves consume.

A Geopolitical Energy Race

In 2023, data centers accounted for 4.4% of total US electricity consumption, a share projected to reach 8-10% by 2030. Whether or not that projection holds, one thing is certain: we are going to need more power. To secure it, tech giants are becoming energy companies:

xAI bought methane turbines for its "Colossus" facility.

OpenAI is planning the "Stargate" facility (5 GW capacity).

Meta and Google are building natural gas plants or expanding hyperscale centers, but face local government pushback and lawsuits.

China: The buildout is state-driven, which lets it sidestep much of the bureaucracy.

By the end of 2023, it had over 600 GW of solar and 440 GW of wind capacity installed.

It has 27 nuclear reactors under construction.

The Economic Logic (Why Them?)

It boils down to two strategic assets that only Big Tech possesses: margins and sovereignty. Unlike traditional industries operating on razor-thin margins, tech giants enjoy 60-70% gross margins, which gives them a unique financial buffer to absorb the 'Green Premium' of clean energy.

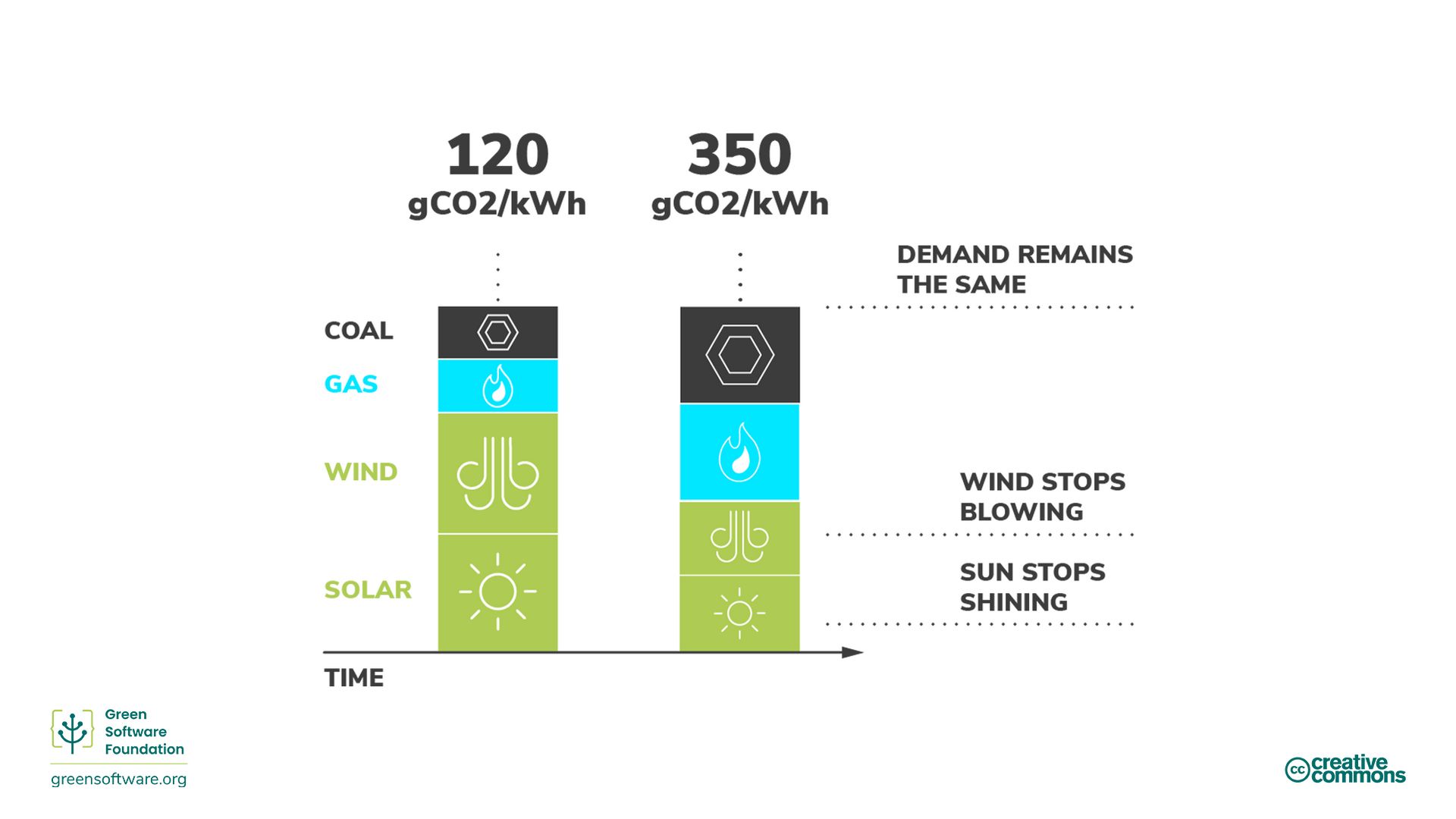

More importantly, whether by investing in SMRs (Small Modular Reactors) or buying power plants, they aren't just trying to be 'low-carbon': they are seeking independence. They want continuous 24/7 energy, which solar or wind alone cannot guarantee without massive battery storage, to shield their operations from the instability of aging public power grids. After all, even a brief cloud outage can cost these companies millions.

"Energy is a critical driver of AI innovation, and Tech companies are aware of this."

Era of GreenOps

Before diving into the tools, we must address the mindset. GreenOps is not a passive feature you switch on: it is a shared responsibility. Every tech professional has a duty to consider the energy impact of their code and feature requests. In turn, it is the company's duty to provide the tools, dashboards, and culture that make these invisible costs visible. You cannot optimize what you cannot measure.

A. Carbon-Aware Computing (Playing with Time and Space)

This involves programming software to be "smart" about the state of the power grid. The first method is Time Shifting: delaying heavy, non-urgent computations (like model training or batch processing) to times when renewables supply more of the grid, such as windy nights or sunny afternoons, rather than running them during coal-heavy peak hours.
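Time shifting boils down to a small search over a carbon-intensity forecast. This is a minimal sketch under stated assumptions: the hourly forecast values (gCO2/kWh) are made-up placeholders rather than real grid data, and `best_start_hour` is a hypothetical helper, not part of any carbon-aware SDK:

```python
# Carbon-aware time shifting: choose the start hour whose window has the lowest
# average grid carbon intensity, while still finishing before a deadline.

def best_start_hour(forecast: list[float], duration: int, deadline: int) -> int:
    """Return the start index whose `duration`-hour window has the lowest mean
    intensity and ends by `deadline` (an exclusive hour index)."""
    last_start = min(deadline, len(forecast)) - duration
    if last_start < 0:
        raise ValueError("job cannot finish before the deadline")
    return min(
        range(last_start + 1),
        key=lambda start: sum(forecast[start:start + duration]) / duration,
    )


# Hypothetical 24-hour forecast in gCO2/kWh (index 0 = midnight).
forecast = [
    320, 300, 280, 260, 250, 270, 340, 420,  # night: placeholder wind-heavy hours
    450, 400, 300, 220, 180, 170, 190, 260,  # midday: placeholder solar peak
    380, 480, 520, 500, 460, 420, 380, 350,  # evening: placeholder fossil-heavy peak
]

start = best_start_hour(forecast, duration=4, deadline=24)
print(f"Start the 4-hour batch job at {start:02d}:00")
```

With these placeholder numbers the job lands in the midday solar trough (11:00). Tightening the deadline, for example to 06:00, forces the scheduler to pick the best night-time window instead.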

The second method is Space Shifting, which moves the workload geographically. The logic is simple: Why compute in hot Virginia, where massive air conditioning is required, when you can route the data to a data center in Finland (benefiting from natural cooling) or Quebec (running on hydroelectric power)?
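Space shifting can be sketched as choosing the region with the lowest estimated emissions for a job. This sketch assumes emissions are roughly IT energy × PUE (power usage effectiveness, a standard metric that captures cooling overhead such as air conditioning) × grid carbon intensity. The region names echo the text, but every PUE and intensity value is an illustrative placeholder, not a measured figure:

```python
# Space shifting: route a job to the region with the lowest estimated emissions.
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    carbon_g_per_kwh: float  # grid carbon intensity (placeholder value)
    pue: float               # facility energy / IT energy (placeholder value)


def emissions_kg(region: Region, it_energy_kwh: float) -> float:
    """IT energy scaled by cooling overhead (PUE) and grid carbon intensity."""
    return it_energy_kwh * region.pue * region.carbon_g_per_kwh / 1000


def pick_region(regions: list[Region], it_energy_kwh: float) -> Region:
    return min(regions, key=lambda r: emissions_kg(r, it_energy_kwh))


# Illustrative placeholder figures only.
regions = [
    Region("virginia", carbon_g_per_kwh=350, pue=1.4),
    Region("finland", carbon_g_per_kwh=80, pue=1.1),
    Region("quebec", carbon_g_per_kwh=30, pue=1.2),
]

job_kwh = 10_000
best = pick_region(regions, job_kwh)
for r in regions:
    print(f"{r.name:>8}: {emissions_kg(r, job_kwh):,.0f} kg CO2")
print("route to:", best.name)
```

In practice the choice also has to weigh latency, data-residency rules, and capacity in each region; this sketch only ranks by estimated carbon.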

B. Right-Sizing: "The Ferrari Fallacy"

Using a massive model like GPT-5 just to summarize a simple email is a fundamental error. It’s like driving a Ferrari to buy a baguette: spectacular, but wasteful. This realization is driving a major strategic shift in Big Tech toward SLMs (Small Language Models) such as Mistral 7B or Phi-3. These models are "good enough" for specific, everyday tasks but run at a fraction of the energy cost of their giant counterparts.
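In practice, right-sizing often takes the form of a router in front of several model tiers. This is a deliberately simple sketch: the model tier names, the set of "simple" task labels, and the 4,000-character threshold are all hypothetical, and production routers typically use a classifier rather than hand-written rules:

```python
# Right-sizing: send simple, well-defined tasks to a small model and reserve
# the large model for open-ended or complex requests.
# Tier names, task labels and the length threshold are hypothetical.

SIMPLE_TASKS = {"summarize", "classify", "extract", "translate"}


def choose_model(task: str, prompt: str, max_small_chars: int = 4_000) -> str:
    """Return which model tier should handle the request."""
    if task in SIMPLE_TASKS and len(prompt) <= max_small_chars:
        return "small-model"  # e.g. an SLM in the Phi-3 / Mistral 7B class
    return "large-model"      # frontier-scale model, used only when needed


requests = [
    ("summarize", "Hi team, the meeting moved to Thursday at 3pm..."),
    ("reason", "Design a migration plan for our payment system..."),
    ("summarize", "x" * 10_000),  # too long for the small tier in this sketch
]

for task, prompt in requests:
    print(task, "->", choose_model(task, prompt))
```

The design choice is to default to the small tier and escalate, so the expensive model only runs when a request genuinely needs it.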

Moonshot: Space-Based Data Centers (by Starcloud)

The concept is to launch AI data centers into orbit to tap into unfiltered, continuous solar energy (24/7), free from Earth's day/night cycles and weather disruptions. Startups like Starcloud (formerly Lumen Orbit) are betting on plummeting launch costs (driven by SpaceX's Starship) to make this economically viable, especially for massive training runs where latency matters less than raw, continuous power availability.

Technical Constraints:

Thermodynamics (The Vacuum Problem): Without air, there is no convection. Heat must be dissipated purely through radiation, requiring massive and complex infrared radiators.

Radiation Hardening: Cosmic rays can flip bits in memory and corrupt models. A cutting-edge 3 nm chip needs heavy shielding (lead or water), or must give way to specialized, slower "radiation-hardened" architectures.
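To see why the vacuum problem (the first constraint above) makes radiators so large, the Stefan-Boltzmann law gives the area needed to reject heat by radiation alone. This is a rough sketch under simplifying assumptions of ours: a 1 MW hypothetical compute module, radiators at 300 K with emissivity 0.9, a ~3 K deep-space sink, one radiating face, and no absorbed sunlight or Earth infrared:

```python
# Radiator sizing with the Stefan-Boltzmann law:
#   P = emissivity * sigma * A * (T_rad^4 - T_sink^4)
# Simplifying assumptions: radiator sees only deep space, absorbs no sunlight,
# radiates from one face. Real spacecraft thermal designs differ.

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W / (m^2 K^4)


def radiator_area_m2(power_w: float, t_radiator_k: float,
                     emissivity: float = 0.9, t_sink_k: float = 3.0) -> float:
    """Area needed to reject `power_w` of waste heat purely by radiation."""
    flux = emissivity * SIGMA * (t_radiator_k**4 - t_sink_k**4)  # W per m^2
    return power_w / flux


# Hypothetical 1 MW compute module with radiators at about 27 °C (300 K).
area = radiator_area_m2(1e6, 300.0)
print(f"{area:,.0f} m^2 of radiator per MW")
```

Under these assumptions each megawatt needs roughly 2,400 m² of radiating surface. Because flux scales with T⁴, running radiators hotter shrinks them quickly, but chips limit how hot the coolant can be.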
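For the radiation-hardening constraint, a single flipped bit can be harmless or catastrophic depending on where it lands. This sketch assumes a model weight stored as an IEEE 754 float32 (the value 0.5 is a hypothetical example) and uses Python's standard `struct` module to flip one bit, mimicking a single-event upset:

```python
# Simulate a cosmic-ray bit flip on one float32 model weight.
import struct


def flip_bit(value: float, bit: int) -> float:
    """Flip one bit (0 = least significant) of a float32 and return the result."""
    (as_int,) = struct.unpack("<I", struct.pack("<f", value))
    (flipped,) = struct.unpack("<f", struct.pack("<I", as_int ^ (1 << bit)))
    return flipped


weight = 0.5
print(flip_bit(weight, 0))   # lowest mantissa bit: value barely changes
print(flip_bit(weight, 30))  # top exponent bit: 0.5 becomes about 1.7e38
```

A flip in the lowest mantissa bit is noise, but a flip in the top exponent bit turns a modest weight into an enormous one that can propagate through every downstream layer, which is why error correction and shielding matter in orbit.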

❝The next generation of tech leaders will be defined not just by their ability to scale intelligence, but by their ingenuity in powering it.❞