Last week, we saw how autonomous agents can execute complex tasks (Agentic Engineering). But for an agent to make the right decision, it must first understand the world it operates in. This is the 'World Model' challenge that AMI Labs has put at the center of its work.

The company is the brainchild of Yann LeCun, 65, who spent over 12 years at Meta, most recently as VP and Chief AI Scientist. He left in November amid growing disagreements over the direction of Meta’s AI strategy. On March 10, 2026, AMI Labs raised an exceptional $1.03 billion, making it the newest French unicorn. Individual investors include Jeff Bezos, former Google CEO Eric Schmidt, Mark Cuban, Xavier Niel, Tim and Rosemary Berners-Lee, and Jim Breyer.

Today's illusion

When a Large Language Model drafts a strategy, it can seem to genuinely understand the world. In reality, today's most advanced models are sophisticated autocomplete engines that predict the next word (more precisely, the next token) probabilistically. A toddler knows that a dropped pen hits the floor; an LLM only "knows" this because it has ingested text describing gravity. It lacks a foundational grasp of physical reality.
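To make the "autocomplete" point concrete, here is a deliberately tiny sketch: a bigram model that predicts the next word purely from word-pair counts in its training text. Real LLMs use transformers over subword tokens, not bigram counts, and the corpus and function names below are illustrative assumptions. The point is only that such a model's "knowledge" is whatever co-occurrence statistics its text happened to contain.

```python
from collections import Counter, defaultdict

def train_bigram(corpus: list[str]) -> dict[str, Counter]:
    """Count how often each word follows each other word."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def next_word_probs(counts: dict[str, Counter], word: str) -> dict[str, float]:
    """Estimate P(next word | word) from raw counts; empty if the word was never seen."""
    following = counts.get(word.lower(), Counter())
    total = sum(following.values())
    return {w: c / total for w, c in following.items()} if total else {}

# Hypothetical training text: the model only "knows" what these sentences say.
corpus = [
    "the pen falls to the floor",
    "the pen falls to the floor",
    "the pen rolls under the desk",
]
model = train_bigram(corpus)
print(next_word_probs(model, "pen"))      # falls ≈ 0.67, rolls ≈ 0.33
print(next_word_probs(model, "gravity"))  # {} : "gravity" never appeared in the text
```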

That’s why the operational adoption of AI by companies remains limited and risky. A company cannot base its operations on probabilities, even if costs are lower: guaranteeing the quality of what it delivers to its customers is priceless. To unlock reliable automation, the industry must move beyond predictive parrots and embrace "World Models": AI systems that internalize the deterministic rules of reality to reason, plan, and execute. Let’s take a closer look at one of the most promising 'World Model' architectures.

Joint Embedding Predictive Architecture (JEPA)

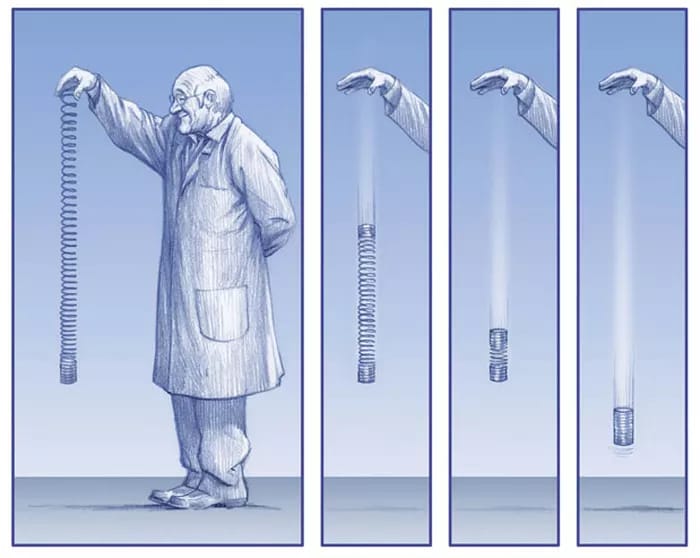

To understand JEPA (Joint-Embedding Predictive Architecture), it helps to think about how humans actually observe the real world.

Imagine you are watching someone throw a tennis ball across a yard. If an AI tries to predict what happens next using "pixel reconstruction," it literally tries to generate a perfect, high-resolution image of the next millisecond. It wastes massive amounts of computing power trying to guess the exact shadow on the ball, the glare of the sun, and the exact position of every leaf on a tree in the background.

LeCun has been saying this for years: predicting every pixel of the future is intractable in any stochastic environment. JEPA sidesteps this by predicting in a learned latent space instead. A generative model that reconstructs pixels is forced to commit to low-level details (exact texture, lighting, leaf position) that are inherently unpredictable. By operating on abstract embeddings, JEPA can capture "the ball will fall off the table" without having to hallucinate every frame of it falling.
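A minimal sketch of where the JEPA loss lives, assuming toy linear encoders in plain Python: the predictor is trained to match the *target encoder's embedding* of the hidden frame, so the objective compares 4 embedding values rather than 64 "pixels". The sizes, weights, and helper names are illustrative assumptions. In real I-JEPA/V-JEPA training the context encoder is learned too and the target encoder is an exponential-moving-average copy of it; this sketch freezes both encoders and trains only the predictor.

```python
import random

rng = random.Random(0)
PIXELS, LATENT = 64, 4  # illustrative toy sizes: a 64-value "frame" mapped to a 4-d embedding

def rand_matrix(rows, cols):
    return [[rng.gauss(0, 0.1) for _ in range(cols)] for _ in range(rows)]

def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

# Both encoders are frozen random projections in this sketch; only the predictor learns.
context_encoder = rand_matrix(LATENT, PIXELS)
target_encoder = [row[:] for row in context_encoder]
predictor = rand_matrix(LATENT, LATENT)

# A visible frame and a "future" frame that adds unpredictable per-pixel noise.
visible = [rng.random() for _ in range(PIXELS)]
hidden = [v + rng.gauss(0, 0.05) for v in visible]

def latent_loss():
    """Squared error between predicted and target embeddings (4 numbers, not 64 pixels)."""
    z_pred = matvec(predictor, matvec(context_encoder, visible))
    z_target = matvec(target_encoder, hidden)  # treated as a constant: no gradient flows here
    return sum((p - t) ** 2 for p, t in zip(z_pred, z_target))

def train_step(lr=0.1):
    """One gradient step on the predictor: d loss / d P[i][j] = 2 * err_i * z_c[j]."""
    z_c = matvec(context_encoder, visible)
    z_pred = matvec(predictor, z_c)
    z_target = matvec(target_encoder, hidden)
    for i in range(LATENT):
        err = z_pred[i] - z_target[i]
        for j in range(LATENT):
            predictor[i][j] -= lr * 2 * err * z_c[j]

before = latent_loss()
for _ in range(200):
    train_step()
after = latent_loss()
print(f"latent loss before: {before:.4f}, after: {after:.6f}")
```

The design choice to keep in mind is the direction of comparison: nothing in the objective asks the model to regenerate the noisy `hidden` pixels themselves, only their embedding.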

Training phase

Meta's V-JEPA 2 is the clearest large-scale proof point so far. It's a 1.2B-parameter model pre-trained on more than 1M hours of video via self-supervised masked prediction: no labels, no text.

The post-training stage is where it gets interesting: just 62 hours of robot data from the DROID dataset is enough to produce an action-conditioned world model (V-JEPA 2-AC) that supports zero-shot planning. The robot generates candidate action sequences, rolls them forward through the world model, and picks the one whose predicted outcome best matches a goal image. This works on objects and environments never seen during training.
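The planning loop described above can be sketched as a simple random-shooting planner. Everything here is a hypothetical toy: `embed` and `world_model` stand in for V-JEPA 2's encoder and action-conditioned predictor, and the "state" is just a 2-d position. As far as I understand, V-JEPA 2-AC refines its candidates with the cross-entropy method and replans after each executed action; plain random sampling is swapped in here for brevity.

```python
import random

def embed(observation):
    """Stand-in encoder (hypothetical): the 'embedding' is just the (x, y) position."""
    return observation

def world_model(state, action):
    """Hypothetical learned dynamics: predicts the next embedding given an action (dx, dy)."""
    return (state[0] + action[0], state[1] + action[1])

def rollout(state, actions):
    """Imagine the outcome of an action sequence through the world model, without acting."""
    for a in actions:
        state = world_model(state, a)
    return state

def distance(a, b):
    """L1 distance between predicted and goal embeddings (lower is better)."""
    return sum(abs(ai - bi) for ai, bi in zip(a, b))

def plan(current_obs, goal_obs, horizon=3, n_candidates=500, seed=0):
    """Random shooting: sample action sequences, keep the one predicted to land closest to the goal."""
    rng = random.Random(seed)
    start, goal = embed(current_obs), embed(goal_obs)
    best_actions, best_cost = None, float("inf")
    for _ in range(n_candidates):
        actions = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(horizon)]
        cost = distance(rollout(start, actions), goal)
        if cost < best_cost:
            best_actions, best_cost = actions, cost
    return best_actions, best_cost

actions, cost = plan(current_obs=(0.0, 0.0), goal_obs=(2.0, 1.0))
first = actions[0]
print(f"first action: ({first[0]:.2f}, {first[1]:.2f}), predicted L1 distance to goal: {cost:.3f}")
```

In a model-predictive-control loop, the robot would execute only `actions[0]`, observe the new state, and plan again, which limits how far errors in the world model can compound.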

The data efficiency is the real technical headline. 62 hours is almost nothing. It suggests that self-supervised pre-training on diverse video can bootstrap enough physical prior knowledge that very little domain-specific data is needed downstream.

That's a strong argument for the JEPA design: if your representations are good enough, you don't need to brute-force every task from scratch. AMI Labs is targeting healthcare and robotics first, which makes sense given JEPA's strength in physical reasoning with limited data. Here is a podcast with Yann LeCun on a concrete application of these world models in the healthcare sector.

Moravec's paradox is the observation that, as Hans Moravec wrote in 1988, "it is comparatively easy to make computers exhibit adult-level performance on intelligence tests or playing checkers, and difficult or impossible to give them the skills of a one-year-old when it comes to perception and mobility".

The Enterprise World Model

If JEPA teaches us anything, it’s that AI needs abstraction to understand the physical world; it cannot get bogged down in pixels. Yet when we look at how companies build AI agents today, they are doing exactly that: forcing LLMs to read the 'pixels' of the business, meaning raw database tables, messy CRM logs, and disconnected APIs.

Enterprise data resides in a multitude of cloud warehouses, SaaS applications, on-premises databases, and operational systems. Each of these sources maintains its own schemas, terminology, and, most importantly, its own business logic. When AI agents lack comprehensive context regarding the nature of existing data, its physical location, and the precise way it should be interpreted, they invent table names, fabricate relationships between entities, and misapply business rules, all with remarkable confidence.

This is precisely where the semantic layer comes into play. By mapping raw data to clear business concepts (such as revenue, churn rate, or active users), the semantic layer provides AI agents with the structured, deterministic understanding they need to plan, reason, and automate complex workflows without hallucinating.
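A minimal sketch of the idea, using only Python's built-in `sqlite3` and assuming a hypothetical `orders` table and metric catalog invented for illustration: each business concept is defined once as a vetted SQL expression, and an agent may only request metrics and dimensions by name. Anything outside the catalog is rejected instead of improvised. Production semantic layers are far richer; this only shows the contract.

```python
import sqlite3

# Hypothetical metric catalog: each business concept maps to one vetted SQL definition.
METRICS = {
    "revenue": "SUM(amount)",
    "active_users": "COUNT(DISTINCT customer_id)",
    "order_count": "COUNT(*)",
}
DIMENSIONS = {"region", "month"}

def compile_query(metric: str, group_by: str) -> str:
    """Turn a business-level request into SQL, refusing anything not defined in the catalog."""
    if metric not in METRICS:
        raise ValueError(f"Unknown metric {metric!r}; known: {sorted(METRICS)}")
    if group_by not in DIMENSIONS:
        raise ValueError(f"Unknown dimension {group_by!r}")
    return (f"SELECT {group_by}, {METRICS[metric]} AS {metric} "
            f"FROM orders GROUP BY {group_by} ORDER BY {group_by}")

# Hypothetical raw table standing in for a warehouse.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (customer_id INTEGER, region TEXT, month TEXT, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?, ?, ?)", [
    (1, "EU", "2026-01", 120.0),
    (2, "EU", "2026-01", 80.0),
    (1, "US", "2026-02", 200.0),
])

print(conn.execute(compile_query("revenue", "region")).fetchall())
# [('EU', 200.0), ('US', 200.0)]
try:
    compile_query("profit_margin", "region")  # an agent asking for an undefined concept
except ValueError as err:
    print(err)
```

Because metric and dimension names are checked against fixed allow-lists before being spliced into SQL, the agent can never inject a free-form expression into the query.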

There are plenty of ways to make an AI agent act deterministically rather than just probabilistically. However, I believe the approach described above is the most comprehensive and viable for many businesses today.

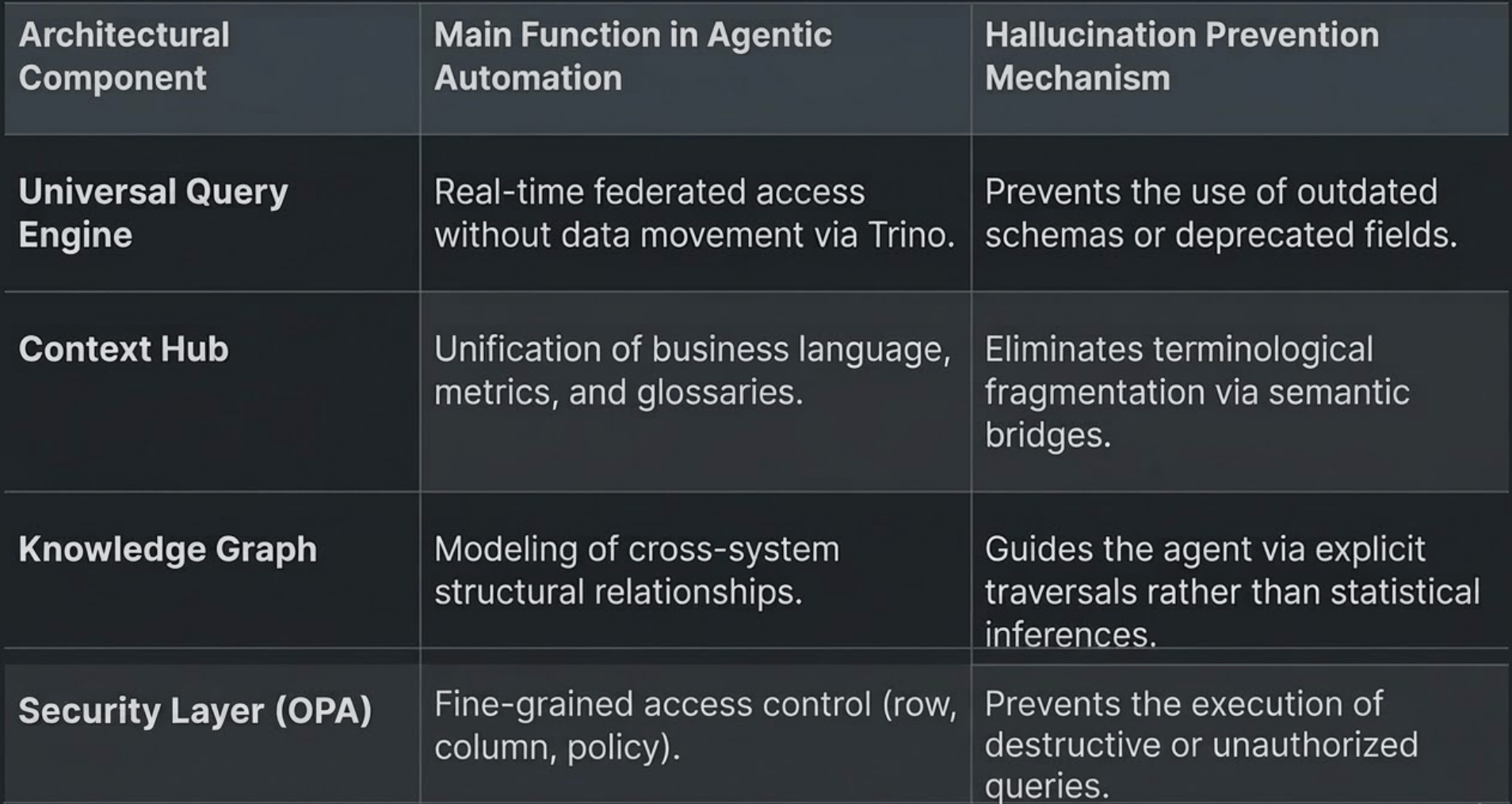

The architecture above is built primarily on a universal query engine that bypasses traditional ETL pipelines and enables federated, real-time queries across distributed data. It is further structured around a dynamic context hub, a single source of truth that unifies metadata and enriches ontologies through continuous learning*. At the heart of the process, agent-based orchestration goes beyond simple SQL generation by adding deep semantic validation via knowledge graphs, which is designed to deliver far greater accuracy than probabilistic approaches alone.

*Here, “continuous learning” refers specifically to enriching the knowledge graph: the AI adds new nodes or relationships to the database (e.g., “Project X belongs to Department Y”). This is distinct from machine learning in the sense of retraining: the model that orchestrates the process is not retrained, and its weights do not change.
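The semantic-validation step and the footnote's notion of "continuous learning" can be sketched with a toy knowledge graph stored as (subject, relation, object) triples. Before a generated query runs, every join it proposes must be backed by a known relationship; new knowledge is added as triples rather than by retraining a model. The table names, relation labels, and the shape of the "proposed joins" are hypothetical.

```python
# Hypothetical knowledge graph: (subject, relation, object) triples.
graph = {
    ("orders", "references", "customers"),
    ("customers", "belongs_to", "regions"),
}

def add_fact(subject: str, relation: str, obj: str) -> None:
    """'Continuous learning' in the footnote's sense: enrich the graph, no model retraining."""
    graph.add((subject, relation, obj))

def is_linked(a: str, b: str) -> bool:
    """True if the graph records any relationship between a and b, in either direction."""
    return any({s, o} == {a, b} for s, _, o in graph)

def validate_joins(joins: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Return the proposed joins that the knowledge graph does not support."""
    return [(a, b) for a, b in joins if not is_linked(a, b)]

# A hypothetical agent-generated plan: one join is known, the other is invented.
proposed = [("orders", "customers"), ("orders", "projects")]
print(validate_joins(proposed))  # [('orders', 'projects')] -> rejected before execution

# Schema-level analogue of "Project X belongs to Department Y".
add_fact("projects", "belongs_to", "departments")
print(validate_joins([("projects", "departments")]))  # [] -> now supported
```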

Key note: for the AI or its routing mechanisms to improve automatically, one essential component is missing from this picture: a telemetry and user-feedback loop (feeding, for example, RLHF: Reinforcement Learning from Human Feedback). Successful requests, OPA security failures, and user corrections should all be logged. This data could then be used to periodically fine-tune the model so that it better understands the company’s specific jargon over time.
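A minimal sketch of that missing telemetry component, assuming a hypothetical event schema stored as a JSON Lines file: each agent request is logged with its outcome (success, OPA policy denial, or user correction), and the usable examples are later exported as prompt/completion pairs for periodic fine-tuning. Field names and the export format are illustrative, not any specific vendor's fine-tuning API.

```python
import json
import os
import tempfile
from dataclasses import dataclass, asdict
from typing import Optional

@dataclass
class FeedbackEvent:                       # hypothetical event schema
    question: str                          # what the user asked the agent
    generated_sql: str                     # what the agent produced
    outcome: str                           # "success", "policy_denied" (e.g. an OPA rule), or "corrected"
    corrected_sql: Optional[str] = None    # the user's fix, when outcome == "corrected"

def log_event(path: str, event: FeedbackEvent) -> None:
    """Append one event per line (JSON Lines) so the log can be replayed later."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(event)) + "\n")

def export_training_pairs(path: str) -> list[dict]:
    """Keep successes and user corrections as prompt/completion pairs for fine-tuning."""
    pairs = []
    with open(path, encoding="utf-8") as f:
        for line in f:
            e = json.loads(line)
            if e["outcome"] == "success":
                pairs.append({"prompt": e["question"], "completion": e["generated_sql"]})
            elif e["outcome"] == "corrected" and e["corrected_sql"]:
                pairs.append({"prompt": e["question"], "completion": e["corrected_sql"]})
    return pairs  # policy denials stay in the log for auditing but are not used as targets

log_path = os.path.join(tempfile.mkdtemp(), "agent_feedback.jsonl")
log_event(log_path, FeedbackEvent("Q1 revenue?", "SELECT SUM(amt) FROM sales", "corrected",
                                  "SELECT SUM(amount) FROM orders WHERE quarter = 'Q1'"))
log_event(log_path, FeedbackEvent("All salaries?", "SELECT * FROM payroll", "policy_denied"))
print(export_training_pairs(log_path))
```

This export produces supervised fine-tuning pairs; a full RLHF setup would instead use the same feedback to train a reward model that then guides the policy.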

You Were Playing a Game. Niantic Was Training an AI.

Who would have thought that one of the world's largest AI training datasets would come from Pokémon GO?

A physical “World Model” requires an understanding of spatial geometry, depth, lighting, and occlusion. Sending camera-equipped vehicles to every park, pedestrian street, and building in the world is impractical, so collecting spatial data at scale has long been a major bottleneck.

While millions of us were just walking around trying to complete the Pokédex, we unknowingly helped build a massive 3D map from 30 billion images. Every PokéStop scan captured imagery tagged with GPS position, lighting conditions, and camera angle, the kind of data that enables centimeter-level visual positioning. Niantic is now using this crowdsourced data to power visual navigation for autonomous delivery robots. Arguably one of the greatest AI projects in history was disguised as a mobile game a decade ago.